How Princeton Can Save Young Minds from Themselves

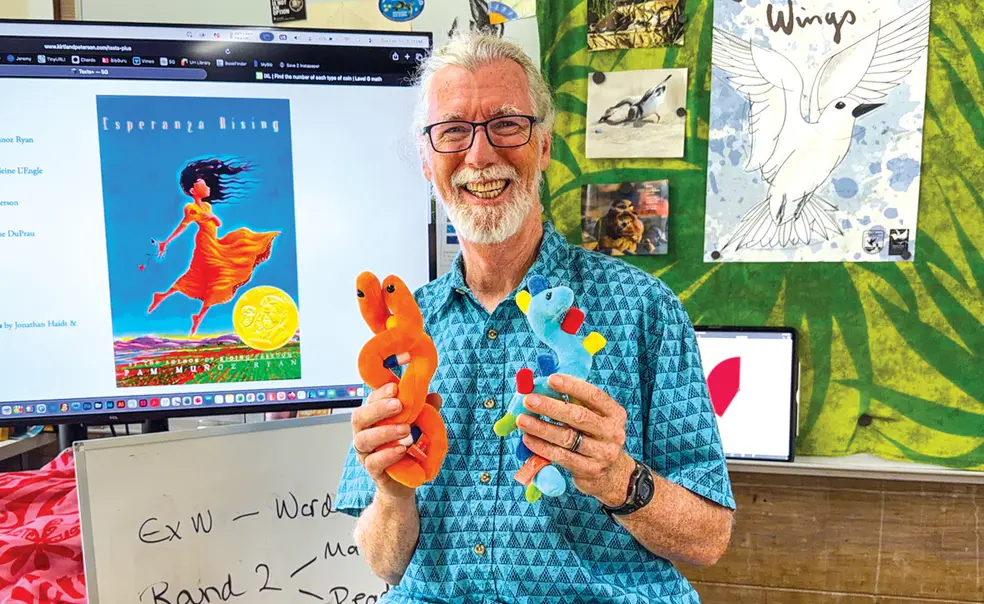

Kirtland C. Peterson ’82, a management consultant and clinical psychologist in previous lives, now teaches fifth grade on Oahu in Hawaii.

I was recently knocked on my teacher keister when a student handed in an AI-generated essay.

I am a change-of-career elementary teacher. The student in question was 11 years old.

As part of their preparation for an upcoming writing assessment, my fifth-grade students wrote an “exciting story” about an “unexpected animal encounter.”

Sitting in my pile of yellow exercise books, between two typical, error-ridden writing assignments, was a glaringly perfect five-paragraph essay sporting a catchy introduction and pithy conclusion. The essay fell short of flawless only because the student had not copied the AI-generated verbiage carefully enough.

Confronted with the “A-plus” essay, the student lied, claiming it was all his own work. My withering British schoolmaster glare inspired a revision: “Mom helped me. A lot.” “MomGPT?” “Well …”

This student has tremendous scholarly potential. But if he can get away with offering AI work as his own, he will end up less educated, less fulfilled, with less to offer society. Like all my young students, this student needs a lot of writing practice. Practice may not make perfect, but it certainly facilitates progress.

Lying about your work and getting caught is one thing. Embarrassing, yes, but at least there’s a life lesson there. Lying about your work and getting away with it, however, is another matter altogether. It blocks students from reaching their potential, blunts practice effects and skill acquisition, develops a lying habit, and derails character development.

Protecting students from the above is not rocket science. It does, however, take full-blooded commitment and gads of energy from teachers and educational institutions.

Thus far, Princeton has left decisions on AI use in the classroom to individual faculty members. It is currently weighing a proposal to require proctoring for in-person examinations, a good start if adopted. Should it commit to a broad set of solutions and generate the institutional energy required to implement them, the University would model positive change for educational institutions at all levels.

The fixes are obvious. Yet obvious fixes are often not made thanks to apathy and inertia, resistance to change, torpidity, investment in the status quo, lack of imagination, and defeatism. Meaningful change requires energy and commitment for the long haul, on the part of a sufficiently large number of people.

Eliminate writing “homework.”

It used to be that work completed outside the classroom or lecture hall represented students’ work. In 2026 this is a dangerous assumption.

Thus, teachers and professors should eliminate all writing homework aimed at learning or assessment.

Hoping that AI detection software will save the day is a losing proposition as large language model (LLM) sophistication increases. But that doesn’t mean that homework needs to be abandoned.

Many assignments remain worthwhile: reading papers, articles, and books; watching online lectures and tutorials; preparing for tests and examinations; research; field work; and much more.

Princeton might go a step further, eliminating applicant essays. These have been suspect since long before LLMs entered the mainstream, as tutors, coaches, and parents have been known to take control of the keyboard from students.

Write in a controlled environment.

In the 1970s, I took my high school-level exams in a proctored hall. I wrote my essays by hand. This is a viable way to assess student knowledge and writing ability.

For those who cringe at the thought of suffering stacks of abysmal handwriting, there is hope. I took my clinical-psychology licensing examinations at a dedicated computer, at a specific testing location. I took nothing — could take nothing — into the exam. The computer had no internet connection. All written work was word-processed.

Permanent controlled testing rooms could be set up on university and high school campuses. Through relationships with established testing centers, students might have the option to take exams off campus. Students need not all sit for an assessment at the same time. Teachers and professors worried that early exam takers will share questions with other students can develop several equivalent exams.

If Princeton required that all to-be-graded writing be completed in a controlled testing environment, many other institutions would follow suit.

Princeton might also require applicants to provide writing samples in similar circumstances, perhaps asking students to write about difficult-to-prepare-for-in-advance topics. High school seniors’ writing skills would improve nationwide.

Common sense is required. Recent attempts to implement some of the above allowed students to use their own laptops. It doesn’t take a rocket scientist to figure out what happened.

I firmly believe Princeton has the intellectual heft — and streetwise smarts — to make a go of implementing a high-quality, self-correcting system of AI-free writing assessment.

Why has so little changed?

Much has been written and many hands wrung over the negative impact of AI in education, with some attention paid to the impact this has on learning, character development, and social mores.

So, why has there been so little effort to reduce the negative impact?

Sadly, it seems a great many would rather bemoan the impact of AI than do anything meaningful about it. If this is unfair, I apologize. I work in the trenches. This is what I see.

This lack of interest in meaningful change in response to AI-generated student work follows an older issue: teachers increasingly disinclined to provide detailed feedback for written work.

To wit, a friend in law school, at the very top of her class, once received a B-minus for work she knew was far better than that. She confronted the professor. He was honest enough to admit that he didn’t read student essays. Believe it or not — and it was hard to believe — he would toss student work from the top of his stairs, then provide a grade based on where the work landed. My friend did get her grade changed.

Fair or unfair, it seems that many teachers and institutions are relatively unconcerned about students handing in AI-generated work. Assessing written work is a time sink and an imposition, not a passionate commitment to educating young minds.

My fantasy is that Princeton, my alma mater, will roll out the big guns, deploy behind-the-lines operatives, and stop AI work poisoning serious student writing.

If Princeton is looking for a new front to be “in the nation’s service,” I can’t think of a more worthy one.

3 Responses

Alan M. Weinstein ’71

1 Week Ago

In Defense of the Honor Code

Seems like Mr. Peterson’s fifth grader touched a nerve. I’m not sure what can be expected from the ethical development of an 11-year-old, but college students should know what honesty is about.

In the university setting we should continue to rely on the honor system, and to advertise that as integral to our mission. Our students should know that in their academic world their teachers and peers expect them to act with integrity. This expectation carries over into the professions in which integrity is a sine qua non: scientists and engineers don’t falsify data; doctors don’t push for unnecessary treatments; fiduciary advisers don’t swindle.

Policing strategies to enforce honesty do no one any good: not the student, who is demeaned, nor the faculty, whose time and effort are wasted. This is a dance that tramples student-faculty bonding. We can imagine escalating efforts that devolve into high-tech policing regimes in which all essays are put through plagiarism detectors, students are put through TSA-like screening on entry to exam rooms, and then are actively surveilled by AI-powered cameras programmed to spot cheaters.

For a path forward on this issue, I would urge that Princeton adhere to the 1967 AAUP Statement on Government of Colleges and Universities, which identifies the faculty as stewards of the academic enterprise. Modification to the honor code should not be imposed by an administration in response to alumni preference but should be the consensus product of faculty deliberation.

Dorina Amendola ’02

1 Week Ago

Prioritize Character Development to Combat AI Cheating

In Dr. Peterson’s analysis of the problems of his lying student and dealing with increasing AI fraud in schools, character development was his last stated concern. This disturbs me. Is the lower quality writing of this future lying adult, or the lack of trustworthiness, the greater loss to himself and society — never mind scholarship? Poor, undereducated people with character always have more wealth than liars and cheats who get to rule over them. Some people still teach this. So what should we be protecting young minds from?

A cheating student is reacting to his teachers’ (including parents’) unspoken prioritization of values: material advancement, status, expediency, over immaterial goods that never used to need explanation, such as his soul. When the Honor Code was developed, Mark 8:36 was part of the fabric of society even if only as an ideal. Now, nothing not quantifiable has meaning. If that’s the case, does anything?

We have tried a great social experiment claiming that moral development can advance in a secular-materialist-quantifiable paradigm. I think this testifies otherwise. To truly combat illicit AI encroachment in the classroom, we must begin with renewing character formation in our kids. And … our adults?

Rachel Schwartz ’18

2 Weeks Ago

Oral Exams Could Combat AI Use

Another method could be transitioning to oral examinations and presentations with rigorous follow-up questions. Students would have to use their own words and be able to retain and recall (rather than look up) knowledge. This could also help prepare them for a world in which writing might unfortunately become devalued due to suspicions of AI use. Optimistically, this could lead to greater appreciation of face-to-face interaction in academic, professional, and social life.